Micron Technology

-

By 2030, edge AI is projected to dominate total memory demand, far surpassing datacenter use. Even with conservative assumptions, billions of devices running sophisticated AI locally could consume 5–7 EB (exabytes) of high-bandwidth memory (5–7 million TB), compared with roughly 1 EB in the datacenter. Autonomous vehicles alone (cars only, before counting drones, robots, tablets or phones), potentially 100 million units worldwide, could carry 32–48 GB of HBM-equivalent memory per car, while industrial and service robots, numbering perhaps 50 million, might each need 24–32 GB.

Consumer devices like AR/VR headsets, tablets, and smart home devices add billions more units. Individual HBM demand is smaller, but collectively they account for several exabytes.
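For anyone who wants to check the vehicle and robot numbers, here is a rough sketch of the arithmetic. The unit counts and per-device GB are simply the projections quoted above, not measured data, and the function name is just for illustration. Consumer devices are left out because no per-device figure is given.

```python
# Back-of-envelope check of the edge-memory figures quoted in the post.
# All inputs are the post's own projections, not measured data.
GB_PER_EB = 1_000_000_000  # decimal units: 1 EB = 1e9 GB

def fleet_memory_eb(units, gb_per_unit):
    """Total memory in exabytes for a fleet of identical devices."""
    return units * gb_per_unit / GB_PER_EB

vehicles_low = fleet_memory_eb(100_000_000, 32)
vehicles_high = fleet_memory_eb(100_000_000, 48)
robots_low = fleet_memory_eb(50_000_000, 24)
robots_high = fleet_memory_eb(50_000_000, 32)

print(f"Vehicles: {vehicles_low:.1f}-{vehicles_high:.1f} EB")
print(f"Robots:   {robots_low:.1f}-{robots_high:.1f} EB")
print(f"Combined: {vehicles_low + robots_low:.1f}-{vehicles_high + robots_high:.1f} EB")
```

On these inputs vehicles and robots alone come to roughly 4.4 to 6.4 EB, before any consumer devices are counted.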

Annual additions will be substantial: roughly 10–20 million cars and 5–10 million robots per year, each increment consuming hundreds of petabytes of high-speed memory. Even with edge memory adoption tempered by cheaper stacked DRAM and LPDDR alternatives, the rate of growth is staggering, exceeding the production scaling plans of current HBM fabs. I would use the term 'forever constrained'. The wedge of demand is steep: memory requirements increase not just linearly with new devices but also with the growing sophistication of AI models, which push per-device memory higher. As a result, planned memory fabrication capacity is unlikely to keep up, creating a structural bottleneck for ubiquitous, high-performance edge AI. If that is correct, it would drive prices up for years as demand continues to exceed supply.
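A quick sketch of the "hundreds of petabytes" per year claim, assuming the same per-device memory as in the installed-base estimate (32–48 GB per car, 24–32 GB per robot). Those pairings are my assumption for illustration; the post does not spell them out for the annual numbers.

```python
# Annual memory added by new cars and robots, in petabytes.
# Per-device GB values are reused from the installed-base estimate (assumption).
GB_PER_PB = 1_000_000  # decimal units: 1 PB = 1e6 GB

def annual_memory_pb(units_per_year, gb_per_unit):
    """Memory shipped per year, in PB, for a given unit volume."""
    return units_per_year * gb_per_unit / GB_PER_PB

cars_pb = (annual_memory_pb(10_000_000, 32), annual_memory_pb(20_000_000, 48))
robots_pb = (annual_memory_pb(5_000_000, 24), annual_memory_pb(10_000_000, 32))

print(f"Cars:   {cars_pb[0]:.0f}-{cars_pb[1]:.0f} PB per year")
print(f"Robots: {robots_pb[0]:.0f}-{robots_pb[1]:.0f} PB per year")
```

So each yearly increment lands in the hundreds of PB for either category, which is consistent with the wording above.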

This is my take. Whilst the market today worries that the memory party will soon be over, I'm thinking even MU's planned $200B expansion will not be enough. That plan covers 10 years. I will speculate now that by the end of next year the $200B plan becomes $400B or more.

-

Micron Technology’s decision today to repurchase up to $5.4B of its senior notes is a clear positive signal about its financial strength and discipline. Using cash rather than issuing stock shows the company is generating solid cash flow and prioritising long-term balance sheet health over short-term optics.

By reducing higher-interest debt in the 5–6% range, Micron effectively locks in a risk-free return and lowers future interest expenses, which will support margins over time.
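As a rough illustration of what that means in dollars, here is a minimal sketch. It assumes the full $5.4B is actually retired and that the coupons sit in the 5–6% range mentioned above. It ignores any premium paid on the repurchase and the interest Micron would otherwise have earned on the cash.

```python
# Rough annual interest saved by retiring debt (illustrative only).
def annual_interest_saved(principal, coupon_rate):
    """Interest no longer paid each year on retired principal."""
    return principal * coupon_rate

principal = 5.4e9  # up to $5.4B of senior notes, per the post
low = annual_interest_saved(principal, 0.05)
high = annual_interest_saved(principal, 0.06)

print(f"Interest saved: ${low / 1e6:.0f}M to ${high / 1e6:.0f}M per year")
```

That is on the order of $270M to $324M a year in avoided interest if the whole amount is bought back.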

-

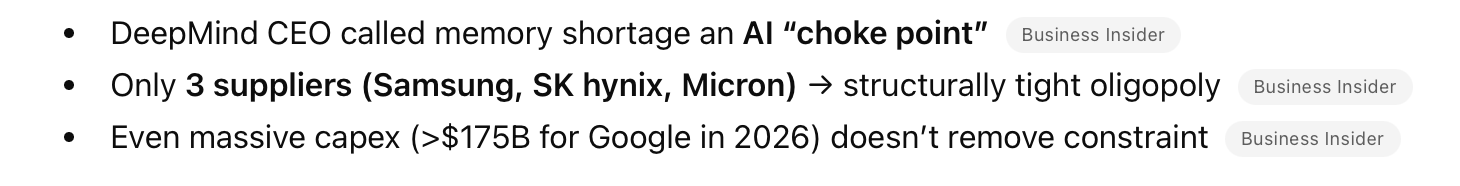

Just seen a report re Google developing chips that need less memory ..

The report indicated that this was behind the drops in Micron this week -

Hi C,

It's an algorithm not a chip and it's a nothing-burger. It has no impact on memory requirements whatsoever and it shows you just how ignorant the participants in the market are.

Google’s “Turbo” narrative is intellectually lazy. The leap from “better AI efficiency” to “less memory demand” ignores how technology adoption actually works. Efficiency lowers costs, which expands usage—basic economics. Dumping Micron Technology on that headline assumes AI growth is fragile and linear, when it’s explosive and compounding. It’s a textbook case of headline-chasing algos and shallow thinking masquerading as insight. No serious analysis, no nuance—just reflexive selling. If this is the market’s level of reasoning, it’s not pricing risk; it’s broadcasting confusion.

And if you want to get technical....

First, the “post rack-scale GPU” reality: once you’re deploying clusters at that level, memory bandwidth and capacity (HBM, interconnect efficiency, etc.) are hard constraints, not optional luxuries.

Software improvements don’t remove that—they just let you push the hardware harder. That typically increases utilisation, not reduces demand.

Second, the token growth point is the killer. If total tokens processed have exploded ~2500×, then a 6× efficiency gain is statistical noise. You’re still looking at orders-of-magnitude net growth in compute and memory demand. Demand is moving way faster than efficiency.
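To put numbers on that, here is a simple sketch. It assumes resource demand scales in proportion to tokens processed and that the efficiency gain cuts the resources needed per token by the same factor. Both are simplifications of mine, not claims from the report.

```python
import math

# Net demand growth when usage grows faster than efficiency improves.
token_growth = 2500.0    # ~2500x growth in tokens processed (from the post)
efficiency_gain = 6.0    # 6x efficiency improvement (from the post)

# Assumption: demand is proportional to tokens / efficiency.
net_growth = token_growth / efficiency_gain
orders_of_magnitude = math.log10(net_growth)

print(f"Net demand growth: about {net_growth:.0f}x")
print(f"That is {orders_of_magnitude:.1f} orders of magnitude")
```

Even after the 6× saving, net demand on these assumptions is still up by a factor of roughly 400.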

Third, these optimisations aren’t new. Google and others have been shipping incremental efficiency gains for years—compiler improvements, sparsity tricks, better routing, quantisation, etc. The so-called “Turbo” angle isn’t some step-function event; it’s part of a continuous curve.

So the sell-off in Micron Technology assumes that efficiency gains suddenly matter more than demand growth, and that this time is different from every prior cycle.

That’s a weak assumption. In practice, efficiency gains + exploding demand = more total infrastructure, not less. Micron is so worried that it's just about to buy another plant (4 million sq ft) and repurpose it to accelerate its roadmap. Customers are signing 5-year supply agreements, scrambling to secure their supply chain, including Google, which is a major customer. Rather than listen to the FUD, look at the evidence. The company can only supply 50% of orders, at margins above 80%, and that imbalance is getting worse. The sell-off also completely ignores the edge device market, which will be orders of magnitude bigger than data centre.

It's like the DeepSeek moment: "we don't need these GPUs"... oh wait.

-

Thanks Adam ….as always appreciate your insights

-

And right on cue: Morgan Stanley, in a client note today, reiterated its Overweight rating and $520 price target on MU.

-

They must be on this Forum

-

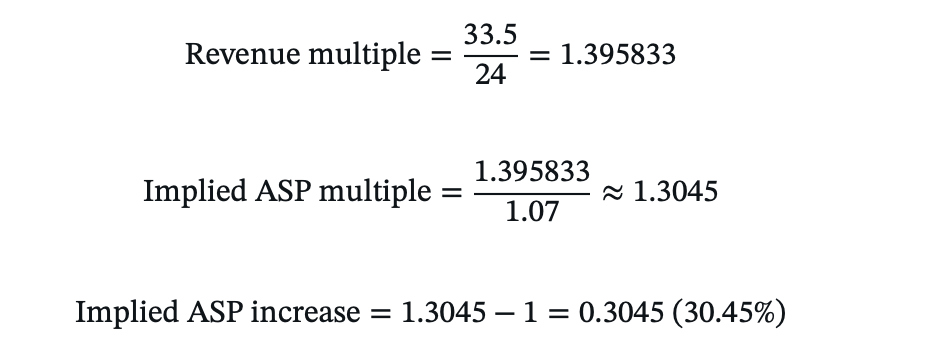

Micron’s “monster” Q3 guidance, issued in mid-March 2026, projected revenue of approximately $33.5 billion with an 81% gross margin. At the time, this was beyond strong. The guide is for the strongest growth in corporate history, but it just got even better.

Analysts and consensus models likely incorporated more conservative server DRAM contract price assumptions of around 10–15% QoQ for Q2 2026 (the April–June calendar quarter; Micron's fiscal Q3 covers March through May). Why? Because TrendForce provides the market data on what customers are paying and bidding.

Yesterday's TrendForce revisions dramatically upgraded that outlook to roughly +45% QoQ for server DRAM prices. This meaningful positive surprise implies higher average selling prices (ASPs) than previously modelled, particularly as new contracts roll into Micron’s fiscal Q3/Q4 2026 and Q1 2027. The impact could be substantial: elevated server and HBM pricing would lift revenue beyond current forecasts while expanding already-record margins further, thanks to the favourable product mix and limited near-term supply growth. Operating expenses are largely fixed whether they deliver $33B or $40B.

Modelling that ASP change, we are looking at $38B revenue and $25 EPS. This is one quarter, not a year, with the stock now at $357!

Worst case, prices stabilise; best case, they keep rising for the next year. They aren't going to fall and will likely rise a bit more, but if we model flat ASP and just look at MU's bit growth of 25%, that's a base of $100 EPS for 12 months and a 25% growth rate going forward. That is a P/E of about 3.6 at today's price ($357 / $100).
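The valuation arithmetic can be sketched as below. Every input is the post's own modelled figure, not reported results, and the "forward" line simply applies the assumed 25% growth to the $100 base.

```python
# P/E implied by the post's modelled EPS (illustrative, not reported results).
price = 357.0          # share price quoted in the post
quarterly_eps = 25.0   # modelled quarterly EPS from the post
growth = 0.25          # assumed forward growth rate from the post

annual_eps = quarterly_eps * 4            # flat-ASP run rate over 12 months
base_pe = price / annual_eps              # P/E on the $100 base
forward_eps = annual_eps * (1 + growth)   # EPS after one year of 25% growth
forward_pe = price / forward_eps

print(f"Annualised EPS: ${annual_eps:.0f}")
print(f"P/E on base EPS: {base_pe:.2f}")
print(f"P/E on EPS grown 25%: {forward_pe:.2f}")
```

On the $100 base the multiple is roughly 3.6, and it drops below 3 once the 25% growth is applied.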

-

Official numbers on memory for the April-June quarter (Q2 2026). These are quarter-on-quarter ASP changes, not annual:

PC DRAM prices: revised up from +10~15% to +40~45%

Server DRAM prices: revised up from +10~15% to +43~48% (the big one)

Mobile DRAM (LP5X) prices: revised up from +13~18% to +58~63%

eSSD prices: revised up from +15~20% to +68~73% (the big one)

TLC/QLC NAND prices: revised up from +15~20% to +60~65% (the big one)

Overall NAND blended ASP: revised up from +18~23% to +70~75%

So when I said +45% above, it looks like it's actually a lot more. Nice!

Look at last quarter's $24B against the $33.5B guide. We know bit growth is limited to about +7% QoQ, maybe a bit more, and that is still a lot. So to get to $33.5B we can infer the ASP rise built into their model, notwithstanding any change in mix: $33.5B / $24B is about 1.396, and dividing by 1.07 for bit growth leaves roughly +30.45%.

Given prices are up a lot more than 30.45%, a massive beat is a given. It wouldn't surprise me if they report close to $40B this quarter and $24-$25 EPS. Last year's Q3 revenue was $9.7B, with $1.62 EPS.
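The inference above can be written out as a short sketch. It assumes revenue is simply bits shipped times blended ASP with no change in mix, which is a simplification. The function names are mine, and the +45% scenario is only an illustration using the server DRAM headline figure as a stand-in for the blended ASP.

```python
# Back out the ASP growth implied by a revenue guide, then test a scenario.
# Assumption: revenue = bits shipped x blended ASP, with constant mix.
def implied_asp_growth(prev_revenue, guided_revenue, bit_growth):
    """ASP growth needed to reach the guide given a bit-growth rate."""
    return guided_revenue / prev_revenue / (1 + bit_growth) - 1

def projected_revenue(prev_revenue, bit_growth, asp_growth):
    """Revenue if bits and ASP both grow by the given rates."""
    return prev_revenue * (1 + bit_growth) * (1 + asp_growth)

implied = implied_asp_growth(24.0, 33.5, 0.07)   # figures in $B, from the post
scenario = projected_revenue(24.0, 0.07, 0.45)   # illustrative +45% blended ASP

print(f"ASP growth implied by the guide: {implied:.2%}")
print(f"Revenue at +45% ASP: ${scenario:.1f}B")
```

With +7% bit growth the guide implies about +30.45% on ASP, and a +45% blended ASP would put revenue a little above $37B, close to the $38B modelled earlier in the thread.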

-

-

Micron taking a bit of a bloody nose again today ….

-

It's frustrating for sure, C. However, it's the same pattern of behaviour with 'AI' at the moment: disbelief.

What you have here is the business and its management (at the coal face) taking new business, signing unprecedented 3-5 year supply deals, committing vast sums in Capex and now doubling down on that investment. Two camps, believers and non believers. There is also a lot of foul play and FUD.

Everyone is forgetting that GOOG itself is one of the biggest 'memory' buyers and has the biggest 2026 Capex ($175B). They too are constrained by memory. I noticed yesterday that Nvidia is now trading at multiples below the S&P average, yet its growth rate is the highest. When you are holding stocks that are thriving but beaten down, it's quite clear that when the fear lifts we will see a profound correction.

I think the market is wrong and not just slightly so.

-

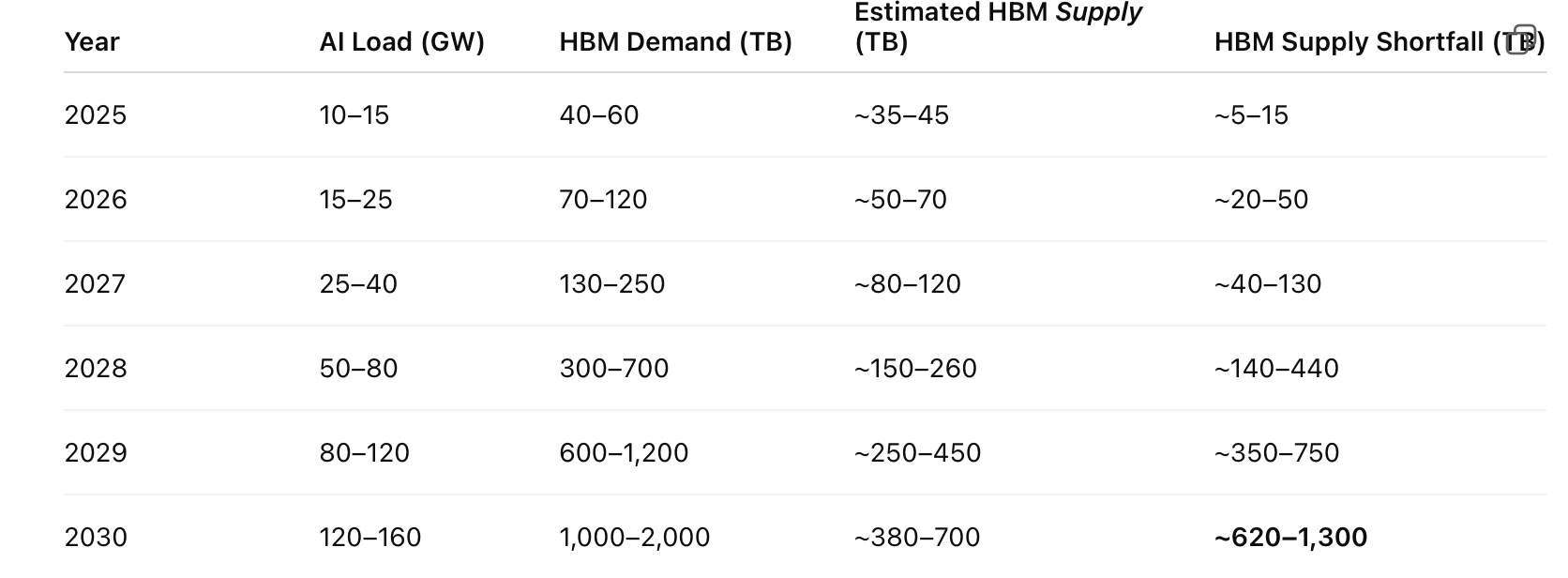

This is based on known, committed data centre build alone. It does not include edge use cases. It shows additional annual GW (the only smart way to look at the build-out). And yes, 2025 was a big year, but compare its 15 GW built to 25 GW in 2026 and 40 GW in 2027.

Reiterating: algos and compression make no difference to the HBM required at the server (hardware) level. They just make inference cheaper. As it gets cheaper, consumption increases.

Shortage is measured in millions of TB: up to a 50M TB shortage in 2025, growing to a 1 billion TB shortage in 2030. Or looking at it another way, 2026 demand is 120M TB but the shortage could approach 1B TB in 5 years. Even if that is wrong by a huge margin, demand exceeds supply. This is based on all Capex committed today. Even if Capex increases next year and beyond, it will have minimal impact on the demand-supply landscape to 2030 due to build duration.
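To get a feel for how steep those curves are, here is a small sketch of the growth rates implied by the figures quoted in this post. It assumes smooth compounding between the 2025 and 2030 shortage estimates, which is my simplification. The endpoints themselves are the post's estimates.

```python
# Growth rates implied by the build-out and shortage figures in the post.
def cagr(start, end, years):
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1

# Shortage: 50M TB (2025) to 1,000M TB (2030), per the post.
shortage_cagr = cagr(50, 1000, 5)

# Annual data centre capacity added, in GW, per the post.
gw_added = {2025: 15, 2026: 25, 2027: 40}
years = sorted(gw_added)
yoy = {y: gw_added[y] / gw_added[p] - 1 for p, y in zip(years, years[1:])}

print(f"Implied shortage growth: {shortage_cagr:.0%} per year")
for year, rate in yoy.items():
    print(f"GW added in {year}: {rate:+.0%} vs prior year")
```

On these numbers the shortage compounds at roughly 80% a year, while annual GW additions grow by about 60 to 67% a year.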

-

I’m sure you’ve seen the note re Citi noting that DRAM spot prices have dropped by 6%. …. But they still give a price target higher than today's price: $425, down from $510.

-

I have ;). Spot prices haven't fallen. Oh, 0.26%. Big firms hug the stock price. They never want to be an outlier. I'm not aligned to $510 either.

-

These so called professional people etc …are never held accountable for what they write etc …

-

It's more a case of knowing how analysts work in reality. There is now supposed to be a 'Chinese wall' between sales and research. Is there in reality? Compliance-wise, yes (the appearance), but does it work?

What you find is that big companies, the mega caps, are always raising money, buying other businesses, planning etc. And guess who they use: Citi, JPM, MS, Goldman. It's a billion-dollar business. They court the companies and their management, so there is potential for conflicts of interest. It would be very rare for a big bank to underwrite a new capital raise and then have one of its analysts write an opinion rating the stock a SELL. It's bad for business. So be very wary of analysts' opinions. Further, you won't ever see a target too far from the spot price. If company X had a price of $100, an analyst might think it's worth $300, but there is no real benefit in sticking your neck out and stating it.

Wall Street can be a swamp for the uninitiated. That is why, when it's noisy, you sit back and do nothing. And the Middle East situation is nothing more than a blip in the journey. Yes, it's tragic, but it's been this way for 5 decades and it will have no lasting impact on what we are investing in.

The Middle East unrest has allowed all the lunatics to spring forth and spout everything imaginable to drive good stocks down. It works; that's why they do it. Things I've read this past week: Helium is running out, so TSMC is in trouble. Not true: yes, Qatar produces a lot, but the biggest supplier is the US, so no shortage. Nvidia is redesigning Feynman. It's 2 years away and it's just a rumour (and where from?). A Feynman redesign means less HBM. Not true. Spot prices are falling. Not true: citing one website showing 0.29% is not proof of anything. As has been discussed countless times, fear causes irrational behaviour, but it also presents opportunities.

We listen to management. After all, they know what's going on, and assuming they aren't crooks we can rely on them far more than a guy on X. The biggest overhang for anything tech-related is capital spending: Capex is the market's kryptonite. I see the polar opposite. GOOG isn't spending $170B on a hunch, and Meta isn't spending $100B on a coin flip.

It won't stop the talking heads spouting the same falsehoods, which is basically that AI is not making money. That is 100% false. And in any case it's common sense that if you build a trillion-dollar piece of infrastructure, time is needed to turn that into a profitable business. You either believe in it or you don't. That is the bottom line. We are not blindly barrelling down a runway heading for a brick wall with our eyes shut; we are fully engaged, watching, listening and re-evaluating daily. And what I see is that the business keeps getting better, and lately the valuations have only become more attractive.

The biggest mistake investors make is looking at the stock price and correlating that (solely) with the company's health.

-

Micron gave a business update today at Cantor Fitzgerald.

Iran war has no impact on their business

Their raw material supply chain is in a far better position than their competitors.

Management expects material surplus cashflows to be employed buying back stock in the back end of 2026 (acquisitions and Capex absorb current cashflows), which tells me there is more to come.

ASPs will increase as pricing moves to a per-bandwidth basis, so memory is being reframed as $ per performance, not $ per bit. Very interesting, and it fits with the margin-up narrative. Cantor raised its price target to $700 based on what they have been told. Nice.

-

Here are the notes from the investor meeting today:

-

Fundamental Shift: From Price-per-Bit to Price-per-Bandwidth. This was the standout insight shared by Micron’s management. The memory industry is moving away from the traditional focus on price-per-bit (how many bits are sold and whether the price per bit rises or falls).

In the AI era, especially with HBM (High Bandwidth Memory) and high-performance DRAM, customers now evaluate memory primarily on price-per-bandwidth — i.e., how much data per second can be delivered to the GPU.

While price-per-bit may rise in 2026, price-per-bandwidth is expected to decline because newer products deliver significantly higher performance.

This allows Micron to increase average selling prices (ASPs) while customers perceive better price-performance and improved total cost of ownership (TCO).

Implication: Stronger pricing power, structurally higher gross margins, and a break from the old cyclical pattern where rising bit supply led to margin collapse. HBM and AI DRAM are now integral to overall GPU system performance rather than just capacity.
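Here is a toy example of how price-per-bit can rise while price-per-bandwidth falls. Every number in it is hypothetical and chosen only to show the mechanics. None of these are Micron prices or real product specs.

```python
# Toy comparison of price-per-GB vs price-per-bandwidth across two generations.
# ALL figures below are hypothetical, for illustration only.
def unit_prices(price, capacity_gb, bandwidth_gbps):
    """Return (price per GB, price per GB/s) for a memory part."""
    return price / capacity_gb, price / bandwidth_gbps

old_per_gb, old_per_bw = unit_prices(price=100.0, capacity_gb=24, bandwidth_gbps=800)
new_per_gb, new_per_bw = unit_prices(price=150.0, capacity_gb=30, bandwidth_gbps=1600)

per_gb_change = new_per_gb / old_per_gb - 1   # what the supplier's ASP per bit does
per_bw_change = new_per_bw / old_per_bw - 1   # what the customer pays per unit of speed

print(f"Price per GB:   {per_gb_change:+.0%}")
print(f"Price per GB/s: {per_bw_change:+.0%}")
```

In this made-up case the part costs 20% more per GB but 25% less per GB/s, which is the shape of the argument management is making: the supplier's ASP goes up while the customer still sees better price-performance.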

Multi-Year AI Memory Supercycle: Cantor Fitzgerald reiterated its Overweight rating on Micron and maintained it as a Top Pick, with a price target of $700.

Strong conviction in a multi-year AI-driven memory supercycle, despite broader market skepticism.

Key drivers include explosive HBM demand linked to NVIDIA platforms (including mass production of HBM4 for the upcoming Vera Rubin GPU; remember the rumour that they were excluded), rising memory content per server, and scaling AI inference workloads (more users and tokens require more bandwidth). Today's and transitioning platforms carry 198-288 GB per GPU. Feynman is 1 TB per GPU. Demand will grow twice as fast as all available supply.

Supply and Capacity Outlook: Micron’s 2026 capacity is fully sold out.

DRAM supply is expected to remain tight through at least calendar year 2027, with the first meaningful market balance not anticipated until 2028. And speculating, which markets will expand over the next 18-24 months? Robotics and edge use cases? I think so!

Micron is well positioned to gain share as a credible dual-source supplier (alongside dominant SK Hynix) for high-value HBM.

Capital Returns: Management expects very aggressive share buybacks to commence in December 2026, supported by strong cash flow generation.

-

Geopolitical and Supply Chain Resilience: No material impact is expected from the war in Iran or related disruptions.

Micron’s supply chain for critical inputs (helium, LNG, and other raw materials) is more domestic/U.S.-centric than that of its Korean competitors, providing a relative advantage.

Overall Tone: Management emphasised the secular and durable (we said this 6 months ago) nature of AI-driven memory demand, evolving customer relationships (including more structured supply agreements), and how its high-value memory roadmaps directly enable more advanced AI capabilities. The company is positioning itself as moving beyond a traditional cyclical memory player toward a key AI infrastructure enabler with sustainably higher margins (and why not? If Nvidia can command 75%+ gross margins, so can Micron).

It sounds extremely positive to me. Management will always err on the conservative side, but what they are saying is blue sky for 2 years, as far as they can see, with everything sold out despite aggressive Capex and supply growing as fast as humanly possible.

The stock is up 20% from yesterday's lows. I wonder how those sellers feel today.

-

-

That’s great information and yes nice to see the share price getting back to normal…hopefully a long way up from here