Nvidia News

-

Developing story... NVDA: Mercedes, Nvidia and Uber to partner on large-scale commercial robotaxi deployment. Interesting.

Also, Amazon is in talks to invest $50 billion in OpenAI.

-

AMD reported last night, proving yet again that no, they aren't taking on Nvidia. Data centre revenue was $5.5B, and they guided for contraction, not growth. Compare that with Nvidia's data centre revenue of $60B: 11X bigger and, importantly, twice (yes, twice) the net margin. AMD nets 25.7% and Nvidia is at 57% (121% higher).

Some thoughts. It confirms the pattern: Nvidia owns 85% of the accelerated compute market.

If one takes the view that AMD is fairly valued, then Nvidia's market cap should be about 22X larger, give or take, on a simple fundamental comparison: 11X the revenue at double the net margin (11 x 2 = 22X). AMD's cap is $392B, which would put Nvidia at over $8T. That is just one way to view the disparity in valuations. One is clearly overvalued on a relative basis. The only way that scenario breaks down is if AMD accelerates faster than Nvidia and drives margins much higher, and management have not presented anything to suggest it. Imo AMD shareholders ...“You can’t handle the truth!” – A Few Good Men.
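The relative-valuation arithmetic above can be sketched in a few lines of Python. This is only a rough sketch using the figures quoted in the post ($5.5B vs $60B data centre revenue, 25.7% vs 57% net margin, $392B AMD cap). The function name `implied_market_cap` and the assumption that market cap scales linearly with revenue times net margin are illustrative simplifications, not a valuation model.

```python
def implied_market_cap(peer_cap_bn, revenue_multiple, margin_multiple):
    """Scale a peer's market cap by relative revenue and relative net margin.

    Assumption (hypothetical): value is proportional to revenue x net margin.
    """
    return peer_cap_bn * revenue_multiple * margin_multiple


# Figures as quoted in the post (all in $B or as fractions).
AMD_CAP_BN = 392.0
AMD_DC_REV_BN, NVDA_DC_REV_BN = 5.5, 60.0
AMD_NET_MARGIN, NVDA_NET_MARGIN = 0.257, 0.57

revenue_multiple = NVDA_DC_REV_BN / AMD_DC_REV_BN
margin_multiple = NVDA_NET_MARGIN / AMD_NET_MARGIN

# The post rounds to 11x revenue and 2x margin; also show the unrounded case.
rounded_cap_bn = implied_market_cap(AMD_CAP_BN, 11, 2)
exact_cap_bn = implied_market_cap(AMD_CAP_BN, revenue_multiple, margin_multiple)

print(f"Revenue multiple: {revenue_multiple:.1f}x")
print(f"Margin multiple:  {margin_multiple:.2f}x")
print(f"Implied cap, rounded multiples:   ${rounded_cap_bn / 1000:.1f}T")
print(f"Implied cap, unrounded multiples: ${exact_cap_bn / 1000:.1f}T")
```

With the rounded multiples the implied figure is about $8.6T; the unrounded ratios push it somewhat higher, so the "give or take" in the post leans conservative.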

The other qualitative factor is the disparity between the CEOs. Huang tells it straight and always delivers. The man has immense integrity. Su on the other hand talks a good talk and that's where it ends. Here are some examples:

-

Su has repeatedly framed AMD as closing in on Nvidia’s dominance in AI compute, with major partnerships (OpenAI, Oracle, hyperscalers) and annual AI chip rollout plans.

Reality: Nvidia still dominates the AI GPU market with ~90%+ share, and AMD’s share remains small. Analysts regularly point out that while AMD has some traction with the MI300/MI450 generation, it hasn’t dented Nvidia’s leadership materially yet. Market share gains are slower than the rhetoric suggests.

Implicit promises around product timing — e.g., Helios AI system roadmap

Reality: These integrated server-scale AI systems are harder to ship on schedule, and delays or performance gaps have been points of concern among analysts. Execution risk is non-trivial and timelines often get pushed.

-

-

NVIDIA Pushes Further Ahead in AI Infrastructure with 1.6T Silicon Photonics Deal

NVIDIA’s collaboration with Tower Semiconductor on 1.6-terabit optical modules is another clear signal that NVIDIA is moving faster — and widening the gap — in AI infrastructure, not just AI compute.

The technology at the centre of the deal is silicon photonics, which replaces electrical data transfer with light-based communication inside and between data centres.

As AI clusters scale, performance is no longer limited by GPU compute but by how fast data can move between GPUs. Copper wiring runs into hard limits around heat, power consumption and distance. Optical links solve this by delivering far higher bandwidth, better power efficiency and far greater scalability.

Tower’s silicon photonics platform allows these optical components to be built directly on silicon, enabling 1.6 terabits per second of throughput per module — roughly double the data rate of earlier solutions.

For NVIDIA, this is critical plumbing for large “AI factories” where thousands or millions of GPUs must act as a single system. Faster interconnects mean higher GPU utilisation, faster model training and lower operating costs.

This matters in competitive terms because NVIDIA is attacking the networking bottleneck aggressively and early. It is not just selling GPUs; it is building the entire AI system — compute, networking and now optical infrastructure — as a tightly integrated stack.

AMD, by contrast, is behind in this layer of the AI stack. AMD does have silicon photonics efforts underway, including recent acquisitions and internal R&D aimed at co-packaged optics. However, these moves are earlier-stage and defensive. AMD is building capability; NVIDIA is already announcing and deploying products at scale. There is no comparable AMD photonics networking platform in production today matching NVIDIA’s 1.6T-class roadmap. (on time as planned and announced 18 months ago)

That means this deal does more than advance NVIDIA’s technology — it extends the time gap. While AMD works to close yesterday’s interconnect bottlenecks, NVIDIA is removing tomorrow’s.

In AI infrastructure, being early matters because hyperscalers design around what exists, not what might arrive later. (We have discussed this before.) Grab the pipeline now. Hyperscalers won't spend $100 billion on a slide-deck promise (Su).

Bottom line: silicon photonics is becoming essential for next-generation AI, and NVIDIA is moving faster, deeper and more comprehensively than its rivals. AMD isn’t absent — but it is now further behind, and this deal reinforces NVIDIA’s lead at the system level, where the real long-term advantage is being built.

-

Nobody works harder for his shareholders. Nobody is more reserved. If Jensen says 2026 will be a very big year for the company, it will be very big. In 17 days we will see some colour on just how big.

-

From an interview over the weekend

This aligns with our early-2025 thesis that Nvidia would grow at a 50% CAGR for 5 years.

It's no coincidence that C.C. Wei (TSM) also talks about his roadmap to grow at a 50% 5-year CAGR.

Recap: our previous view on quarterly revenue rhythm:

70/80/90/100 ($B per quarter), and this could be conservative if they get the supply. NB: this is Mag 7 spend only; tier two and enterprise are additional.

Other segments: OEM/Gaming/Visualisation/Auto amount to circa $27B/yr

They could earn $200B in the FY ending Jan 2027. For comparison, the top 5 earning companies in 2025 had earnings ranging from $96B (Saudi Aramco) to $128B (GOOG).

What is staggering is that their growth rate is expected (by some) to be circa 50% per annum for the next 4-5 years.

-

Evercore analysts raise target to $352 and issue a research note (copied from X):

NVDA GPUs in High Demand. 1) Blackwell lead-times are 12-26 wks and hyperscalers turning away customers bc they don't have enough. 2) Vera Rubin appears ahead of schedule - demand is healthy and expected to broaden to non-Cloud/non-LLM by EoY26. 3) NVDA appears to have locked down wafer capacity aggressively and is viewed as first in line for HBM. 4) NVDA long-term share expected to be 60%-70%, but near-term expected at 75%-85%. 5) Acqui-Hire of Groq improves competitive position, particularly as HBM market tightens.

NVDA Still Ecosystem of Choice. 1) ...due to robust and established software ecosystem, which makes it particularly attractive to enterprise customers. 2) it takes 12 months to switch hardware platforms and optimize software for a different hardware stack. 3) Many view LLM makers' strategy of optimizing compilers to run across heterogeneous hardware fleets as a Herculean Labor

Adam, what do you think we will see when these boys report on Wednesday?

-

Hi C,

I have no doubt at all that they will beat the guide/consensus and guide above the consensus too.

A quick recap. Colette Kress (CFO) gave guidance of $65B and $1.52 EPS. Wall Street expects $66B. For what it's worth, I think they will beat those numbers handily. Could they report close to $70B? Yes, and that would be fantastic. I think the guide will be more impactful, and it should include China H200: given it has been approved, one can only assume they will ship in the next couple of months. They might be ultra-conservative and not add them into their guide, or qualify their guide with 'incl. China, excl. China'.

As for how the stock reacts, it's not the result in isolation; it's how investors are positioned relative to the news (earnings). If they are underweight and the result is very good, net buying. If overweight and the result is just good, possible selling. That's the nature of the auction system, and whilst a pop would be very welcome, it's not all that relevant. In my opinion they will continue to grow for years and will be a much, much bigger enterprise than they are today, rewarding investors with peer-beating returns.

What I do know is that the company is executing flawlessly: yield on Blackwell and the transition to Blackwell Ultra have gone better than expected and ahead of schedule. This can only mean more chips, sooner, so one can speculate that revenue should be much higher than forecast. We need to remember that this is not a demand-limited business; it is materials-constrained, with firm orders of $500B+ over the next 15-18 months. Nvidia results are all about supply availability, and if you follow the trail you will know that gains have been made over the past quarter: gains with CoWoS packaging, yield and HBM. Vera Rubin is ahead of schedule and I expect Jensen to confirm this tomorrow.

In an ideal world they report $70B, margins at the top of the guide, and guide $80B. But I'll be happy with $67B/$76B (guide) with continued high margins, notwithstanding that Vera Rubin will initially show up with a lower margin due to yield, as is always the case.

One more thing: Jensen Huang has been quite vocal recently about how well the company is doing. He doesn't have a history of being quite so specific: '2026 is going to be a great year for Nvidia', coupled with a cheeky grin. Almost as if he's thinking 'if only they knew'. He is conservative, but he is also a great CEO and he is absolutely committed to shareholder value, insofar as providing the market with a reality check on the business's future prospects. I do expect him to really make the point that growth is accelerating, perhaps expand on the $500B visible order book, maybe start talking in trillions, not billions. I won't be missing the earnings call!

One more sleep and we find out

-

Thanks Adam….really appreciate the update

-

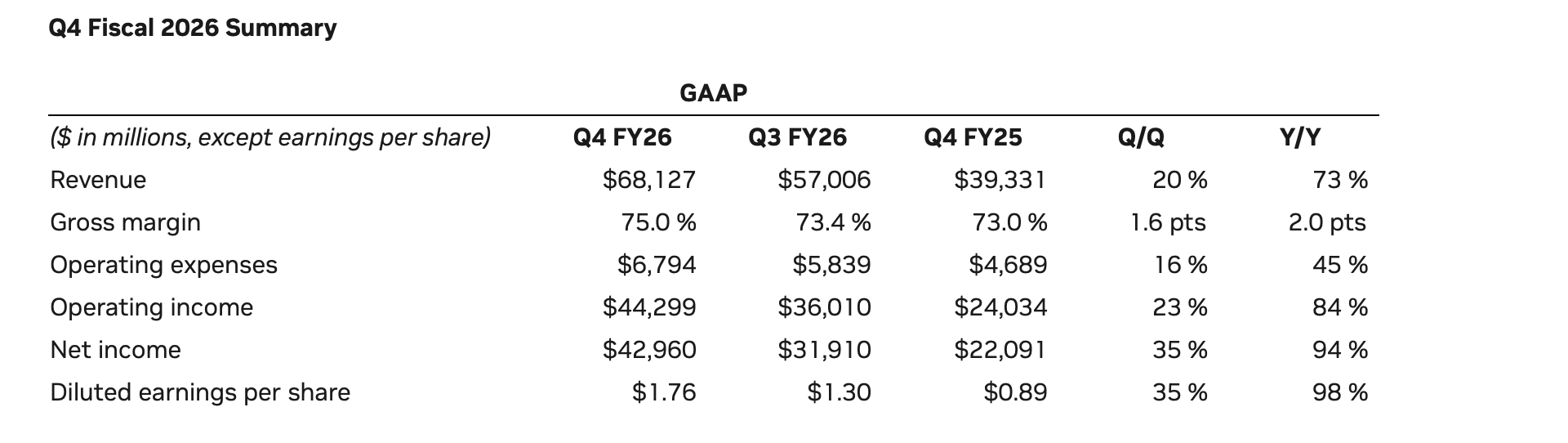

$68B and $74B guide. Largest net income in history (not just their history) at $43B. Expanded margins: 75%. On the call. More tomorrow. Brilliant result. Stock now over $200.

A big day just got even better

-

The first of several posts. There is so much detail that I think I'll break it up to focus on what is key.

Headline numbers:

Revenue $68B: as expected, a solid beat and a solid guide, which we know is conservative.

The company earned $4.90 per share for the full fiscal year, which places its trailing PE at 38. In isolation that is quite reasonable (given peers and growth rates) and totally irrelevant: only bears look back, investors look forward. However, if we did want to look back, we would see earnings growth of 98% for the year and 35% for the quarter. I'll repeat that: 35% sequential quarterly earnings growth. Find me another company that is printing these numbers. Even annually would be good. You may recall my model suggesting 50% for 5 years; we are tracking nicely against that with plenty of wriggle room. I would suggest 50 is probably too low! NB: the $43B print is to some extent flattered by a favourable tax expense, which may or may not be repeated. That said, it is again irrelevant given the clear and unabated growth and extremely high gross margins (75%), which the CFO confirmed will stick around. We could model net income margins for the foreseeable future at around 60%. For context, AMD is about 23%, and Apple and MSFT are in the late 30s to 40%. 60% is frankly ludicrous.

Zero (HPC) China sales have been included in the guide, which is a conservative measure. It's not a case of if but when, imo.

Growth is accelerating. And whilst its sheer size means growth rates will of course moderate, I can safely say that Nvidia is outperforming every other global business (maybe not Micron). What do I mean by that? Rather than a bubbly stock trading at a fwd multiple of 100 with a 25% growth rate (PEG 4), you have this company trading at a fwd PE of 25 with a growth rate of almost 100% (but let's use 50%), so PEG = 0.5. A good way to look at it: the other companies are carrying 8X the risk for the price they are trading at. I like those odds.
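For anyone who wants to check the PEG comparison, here is a minimal sketch. It uses the standard definition (forward P/E divided by expected annual growth in percent) and the two illustrative cases from the post; the function name `peg_ratio` is just a label chosen for this example.

```python
def peg_ratio(forward_pe, growth_pct):
    """PEG = forward P/E divided by expected annual earnings growth (in %)."""
    if growth_pct <= 0:
        raise ValueError("PEG is only meaningful for positive growth")
    return forward_pe / growth_pct


# The two cases from the post: a "bubbly" stock vs Nvidia at a 50% growth haircut.
bubbly_peg = peg_ratio(forward_pe=100, growth_pct=25)
nvda_peg = peg_ratio(forward_pe=25, growth_pct=50)
relative_price_of_growth = bubbly_peg / nvda_peg

print(f"Bubbly stock PEG: {bubbly_peg}")
print(f"Nvidia PEG:       {nvda_peg}")
print(f"Ratio:            {relative_price_of_growth}x")
```

The ratio of the two PEGs is where the 8X figure comes from. Reading that as "8X the risk" is the post's interpretation; strictly, it is 8X the price paid per unit of expected growth.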

-

Abridged earnings call highlights and points to note.

Record financial performance: Revenue reached $68 billion in Q4 (+73% year-on-year), with record operating income and free cash flow. Full-year data centre revenue was $194 billion (+68%). Free cash flow totalled $97 billion for fiscal 2026 (period ending January 2026).

Data centre dominance: Q4 data centre revenue was $62 billion (+75% YoY, +22% sequentially), driven by strong demand for the Blackwell architecture and Blackwell Ultra ramp. Nearly 9 gigawatts of Blackwell infrastructure are deployed. Note AMD crowing about 6GW over 5 years with Meta. Nvidia will deploy > 20GW this year alone.

Sustained growth outlook: Q1 revenue is expected to be $78 billion ±2%, mainly from data centres. (I think I stated 74 earlier, but it's +$10B without China, which aligns with our expectation of +$10B each quarter.) Sequential revenue growth is expected throughout calendar 2026, with visibility into 2027. No China data centre compute revenue is assumed. Looks like our 80/90/100/110 prediction aligns, and it could be low. That translates to forward twelve-month revenue of $380B and earnings of $220B. That is soon approaching twice the earnings of the world's second most profitable company. Think about that!
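A quick sketch of the forward arithmetic, assuming the 80/90/100/110 quarterly path (in $B) and the $220B earnings estimate from the post. The variable names are illustrative, and the implied net margin is simply earnings divided by revenue, not a company figure.

```python
# Quarterly revenue path from the post, in $B (an expectation, not guidance).
quarterly_revenue_bn = [80, 90, 100, 110]
forward_revenue_bn = sum(quarterly_revenue_bn)

# Forward earnings estimate from the post, in $B.
forward_earnings_bn = 220
implied_net_margin = forward_earnings_bn / forward_revenue_bn

# Q1 guide of $78B +/- 2%, as stated on the call.
guide_mid_bn, guide_band = 78.0, 0.02
guide_low_bn = guide_mid_bn * (1 - guide_band)
guide_high_bn = guide_mid_bn * (1 + guide_band)

print(f"Forward 12-month revenue: ${forward_revenue_bn}B")
print(f"Implied net margin:       {implied_net_margin:.1%}")
print(f"Q1 guide range:           ${guide_low_bn:.2f}B to ${guide_high_bn:.2f}B")
```

The implied margin lands a little under the ~60% net margin discussed in the earlier post, so the two estimates are at least consistent with each other.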

Networking surge: Networking generated $11 billion in Q4 (3.5x YoY, i.e. +250%). Nvidia is now the biggest networking company in the world. Full-year networking revenue exceeded $31 billion (over 10x since the Mellanox acquisition). They paid buttons for Mellanox. What an acquisition. NVLink, Spectrum-X Ethernet and InfiniBand adoption hit record levels.

Performance leadership: GB300 NVL72 delivers up to 50x performance per watt and 35x lower cost per token versus Hopper. Continuous CUDA optimisation improved GB200 NVL72 performance by up to 5x in four months. NVIDIA positions itself as delivering the lowest cost per token. Right there is why they are No. 1. Monetising AI is ALL about token generation at the lowest cost. AMD can't even match Hopper today! ASICs and TPUs are not even close.

AI demand inflection: Agentic AI has reached a turning point. Inference equals revenue, as token generation drives customer monetisation. Hyperscaler 2026 CapEx expectations are approaching $700 billion, up nearly $120 billion since the start of the year.

Sovereign AI expansion: Sovereign AI revenue more than tripled YoY to over $30 billion, led by Canada, France, the Netherlands, Singapore and the UK. China revenue remains uncertain despite limited H200 approvals. NB: nice to have it back, but irrelevant for the next year or so. Ignore the noise. I do think it will get resolved. I don't buy the 'China might catch up' argument. They could have very competitive models, but who is going to buy them? Not the West. Would you rent a Chinese AI agent? Will any S&P companies use Chinese AI? So imo it's an irrelevance. China will consume it, which is fine. And it would appear DeepSeek is using Blackwell anyway, so 'no China sales' is not really the case. The Wall Street Journal claims DeepSeek is a fraud, with all its work stolen (distilled) from OpenAI et al. Big surprise.

New platform – Rubin: Unveiled at CES, Rubin includes six new chips (Vera CPU, Rubin GPU and new networking components). It will train models using one-quarter the GPUs and reduce inference token costs by up to 10x versus Blackwell. Production shipments begin in H2. Stacking that 10x on Blackwell's 35x cost-per-token gain over Hopper, the math says Rubin could be around 350X lower token cost than Hopper (500X if you start from the 50x performance-per-watt figure). Just incredible.

Gaming and other segments:

Gaming revenue: $3.7 billion (+47% YoY), though supply constraints are expected to weigh on Q1 and beyond.

Professional Visualisation: $1.3 billion (+159% YoY).

Automotive: $604 million (+6% YoY), driven by self-driving demand. Physical AI contributed over $6 billion in FY2026.

Gross margins remain strong: Q4 GAAP gross margin was 75%. Full-year margins are expected in the mid-70s. Management argues sustainability depends on delivering generational leaps in performance per watt and per dollar. And precisely what they are doing. Every year, a new leap fwd.

Capital allocation: Returned $41 billion (43% of free cash flow) to shareholders via buybacks and dividends, while increasing inventory and purchase commitments to secure future supply.

Strategic ecosystem expansion: Deepened partnerships with OpenAI, Meta and Anthropic (including a $10 billion investment in Anthropic). NVIDIA emphasises CUDA ecosystem breadth, full-stack AI infrastructure, and extreme co-design as competitive advantages.

Long-term thesis: Computing has shifted to AI token generation. “Compute equals revenue.” Agentic AI is the current growth driver; physical AI (robotics, manufacturing, autonomous systems) is the next major wave. NB: we know this is going to be huge. Data centre CapEx could reach multi-trillion USD levels by 2030 if AI adoption continues accelerating. The question now is when, not if, Nvidia generates $1T in a fiscal year.

-

Validation Milestone

Microsoft Azure became the first major cloud provider to power on and begin validating a Vera Rubin NVL72 rack (announced by Satya Nadella on 13 March 2026). This is a significant engineering win: the full rack (72 Rubin GPUs + 36 Vera CPUs, NVLink-6 fabric, liquid cooling) is integrated and undergoing qualification in Azure datacentres. It positions Microsoft ahead for early deployments, with broad availability still guided for the second half of 2026 (H2 2026, i.e., July–December).

Rack Cost Estimate

A Vera Rubin NVL72 rack is likely priced in the $3.5 million to $5 million range (most analyst estimates cluster around $3–4 million, with some supply-chain views up to $5–5.7 million). This represents a premium over Blackwell GB200/GB300 NVL72 racks (around $3 million).

The uplift stems from advanced components: HBM4 memory, denser NVLink-6, Vera CPUs, and enhanced liquid cooling (cooling alone rises from ~$50,000 on Blackwell to ~$55–56,000 on Rubin).

NVIDIA doesn't publish official prices, but the economics favour rapid payback through vastly higher efficiency.

Performance Improvements

Rubin delivers massive leaps, especially for inference (the dominant AI workload now): Vs. Blackwell (GB200/GB300 NVL72): Up to 5x higher inference performance per rack (e.g., 3.6 exaFLOPS FP4 vs. ~0.7–0.8 exaFLOPS equivalents). Per-GPU gains include ~50 PFLOPS NVFP4 inference (5x vs. Blackwell), plus better power efficiency and features for agentic/long-context models. Training MoE models needs ~4x fewer GPUs.

Cost per Token Shrinking

This is where Rubin crushes economics—driving the cost of intelligence off a cliff for inference-heavy workloads (e.g., agentic AI, reasoning, MoE models): Vs. Blackwell: NVIDIA states 10x lower cost per million tokens (official claim on specific MoE/reasoning benchmarks like Kimi-K2-Thinking).

Vs. Hopper: Blackwell cut costs by up to 10x (real deployments saw drops from $0.20/million tokens to $0.05 or lower with NVFP4). Rubin stacks another 10x reduction → potentially 100x lower effective cost per token over Hopper in optimised cases.

Providers already realised 4x–10x drops moving Hopper → Blackwell (e.g., 20¢ → 5¢/million tokens for MoE). Rubin positions sub-1¢/million at scale once volumes ramp in H2 2026.
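The compounding of generational cost cuts can be sketched as below. This assumes the figures quoted above (20¢ per million tokens on Hopper, a 4x to 10x cut moving to Blackwell, and a further claimed 10x with Rubin); the helper `compound_cost` is a hypothetical name for illustration, and real-world pricing will depend on model, precision and utilisation.

```python
def compound_cost(start_cost, reductions):
    """Apply successive cost-reduction factors (e.g. 4 means 4x cheaper)."""
    cost = start_cost
    for factor in reductions:
        cost /= factor
    return cost


HOPPER_CENTS_PER_M = 20.0  # cents per million tokens, as quoted above

# Hopper -> Blackwell: 4x (observed low end) to 10x (claimed best case).
blackwell_high = compound_cost(HOPPER_CENTS_PER_M, [4])
blackwell_low = compound_cost(HOPPER_CENTS_PER_M, [10])

# Blackwell -> Rubin: a further 10x, per the NVIDIA claim cited above.
rubin_high = compound_cost(HOPPER_CENTS_PER_M, [4, 10])
rubin_low = compound_cost(HOPPER_CENTS_PER_M, [10, 10])

print(f"Blackwell: {blackwell_low:.1f} to {blackwell_high:.1f} cents per million tokens")
print(f"Rubin:     {rubin_low:.1f} to {rubin_high:.1f} cents per million tokens")
print(f"Total cut vs Hopper: {HOPPER_CENTS_PER_M / rubin_high:.0f}x to "
      f"{HOPPER_CENTS_PER_M / rubin_low:.0f}x")
```

Both ends of the Rubin range come in under 1¢ per million tokens, which is where the sub-1¢ expectation comes from, and the overall reduction versus Hopper spans roughly 40x to 100x depending on which Blackwell figure you start from.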

The upfront rack cost ($4M average) is offset by far more useful compute per dollar, lower power per token, and fewer units needed, making massive AI scaling dramatically cheaper.

In short: Validation is a big early win for Microsoft/NVIDIA, racks cost a hefty $3.5–5M each (premium justified), performance jumps 5x over Blackwell (20–25x over Hopper), and token costs plummet another 10x vs. Blackwell, paving the way for agentic AI at unprecedented scale and affordability.

-

Nvidia now holds approved purchase orders from China customers and intends to restart H200 production, according to Jensen Huang. Interesting, as the company holds 400K chips in stock, so one can only assume the orders are for more than this. Analysts expect the Chinese market to be worth at least $25B, so another nice earner which is not accounted for at present.

-

Shares in Corning jumped more than 14% in premarket trading after it announced a major long-term partnership with Nvidia aimed at massively scaling optical connectivity. The deal is pretty significant, with plans to boost capacity tenfold, which says a lot about just how fast demand for AI infrastructure is growing.

As part of the agreement, the companies will build three new manufacturing plants in the US, creating over 3,000 well-paid jobs. Corning will also expand its fibre production capacity by more than 50%, helping meet the needs of hyperscale data centres that rely on fast, efficient connectivity to run Nvidia’s advanced computing systems.

Jensen Huang framed the move as part of a broader shift, calling AI the biggest infrastructure buildout of our time. Meanwhile, Corning boss Wendell Weeks highlighted the manufacturing angle, stressing that this isn’t just about tech innovation but also about rebuilding industrial capacity.

In simple terms: optical fibre sends data as light, allowing far higher bandwidth and much lower signal loss than copper. It carries more data over longer distances with minimal interference. Latency is lower in practice, and scaling is easier. Copper is fine short-range, but optics handles massive, high-speed data loads far better.